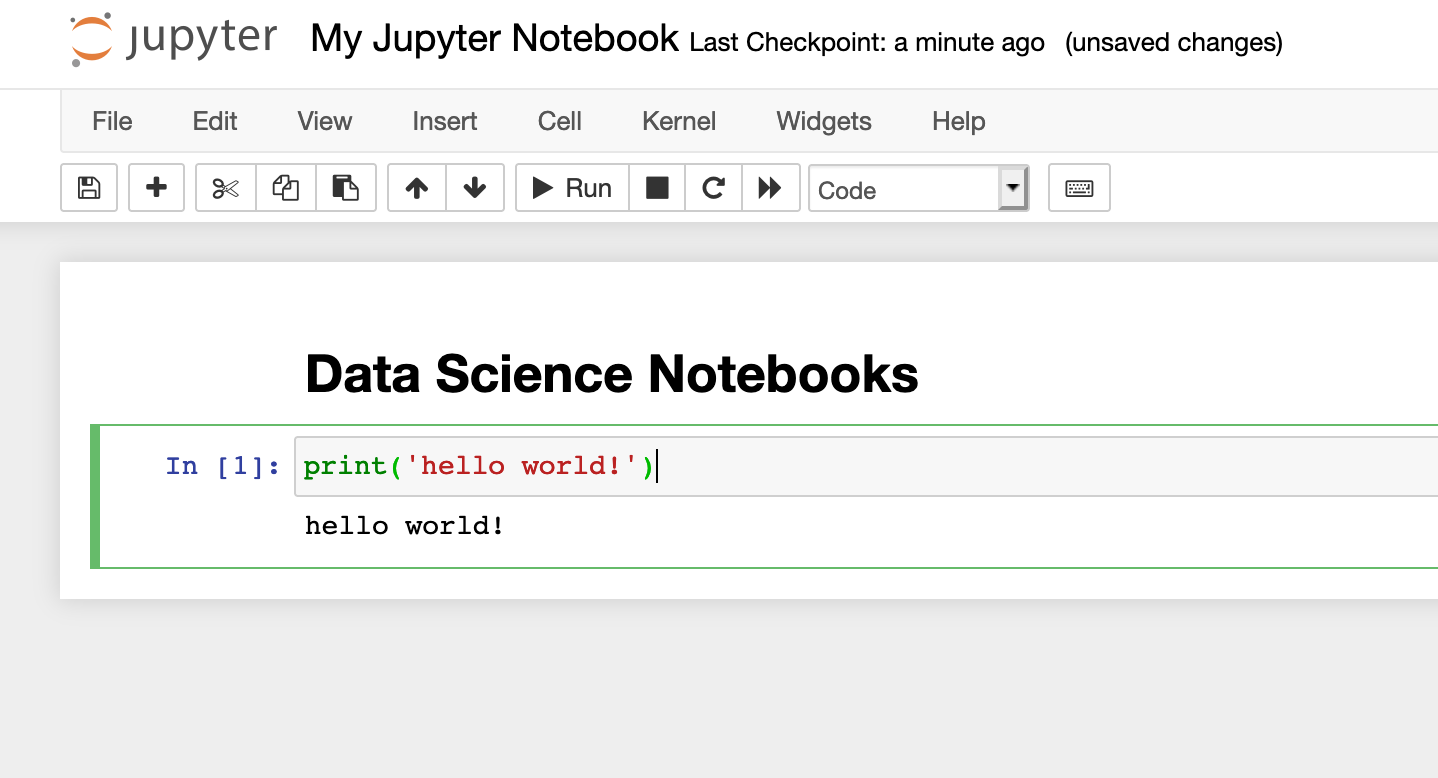

Jupyter

Comparing two data science notebooks.

Notebooks are a core part of how modern data teams explore data, build models, and communicate results. Jupyter Notebook has been a standard choice: flexible, open source, and runnable almost anywhere. Deepnote has introduced an open-source Jupyter alternative with an AI-first design, modern UI, new blocks, and native data integrations.

People comparing Jupyter and Deepnote are typically deciding between two workflow models: a self-managed notebook stack, or a collaborative notebook platform with built-in sharing, integrations, environment management, and the ability to build and run AI agents.

That is the real comparison in this article. Jupyter remains a good choice for individual work and for organizations willing to run JupyterHub or similar infrastructure themselves. Deepnote is built for teams that want humans and AI agents to collaborate, and to move from quick exploration to analysis to shareable outputs in one workspace.

This guide compares Jupyter and Deepnote across data connectivity, collaboration, AI capabilities, environment management, portability, and pricing.

Here, “Jupyter” refers to the broader Jupyter stack, not just the legacy Notebook interface. In practice, the comparison is less about classic Notebook versus Deepnote and more about a self-managed Jupyter workflow versus a collaborative notebook platform.

Before diving deeper, the table below summarizes the most important differences between the two platforms.

| Category | Jupyter Notebook / JupyterLab | Deepnote |
|---|---|---|
| Primary use case | Individual analysis and research | Collaborative analytics and team workflows |
| Deployment | Self-hosted locally or in the cloud | Managed cloud platform |
| Data connectivity | Manual, library-based, environment-specific | Native integrations, shared project setup, SQL and Python in one workspace |
| Collaboration | Requires infrastructure or extensions | Built-in real-time collaboration and project permissions |
| AI features | Available via extensions such as Jupyter AI | Deepnote Agent with full workspace context and custom AI models (i.e., bring your own key) |
| Reuse | Python modules, packages, Git workflows | Modules, semantic-layer patterns, multi-notebook projects, Git workflows, GitHub, and GitLab |
| Sharing results | Export notebooks or static outputs | Share links, publish apps, interactive dashboards |
| Pricing | Free open source software | Free tier plus paid team and enterprise plans |
| Portability | Native .ipynb format | .ipynb, .deepnote, and open conversion tooling across notebook formats |

In practice, both tools can support similar analytical workflows. The difference usually comes down to how teams collaborate, share work, and manage infrastructure.

Jupyter

Jupyter's open-source ecosystem is mature and widely adopted. The core components (Notebook, JupyterLab, JupyterHub, Jupyter Server) are all open source, and the .ipynb format is the most widely used notebook standard in data science.

You can run Jupyter on a laptop, inside Docker, on Kubernetes, or through hosted deployments. That flexibility means organizations also manage their own infrastructure, authentication, and collaboration workflows.

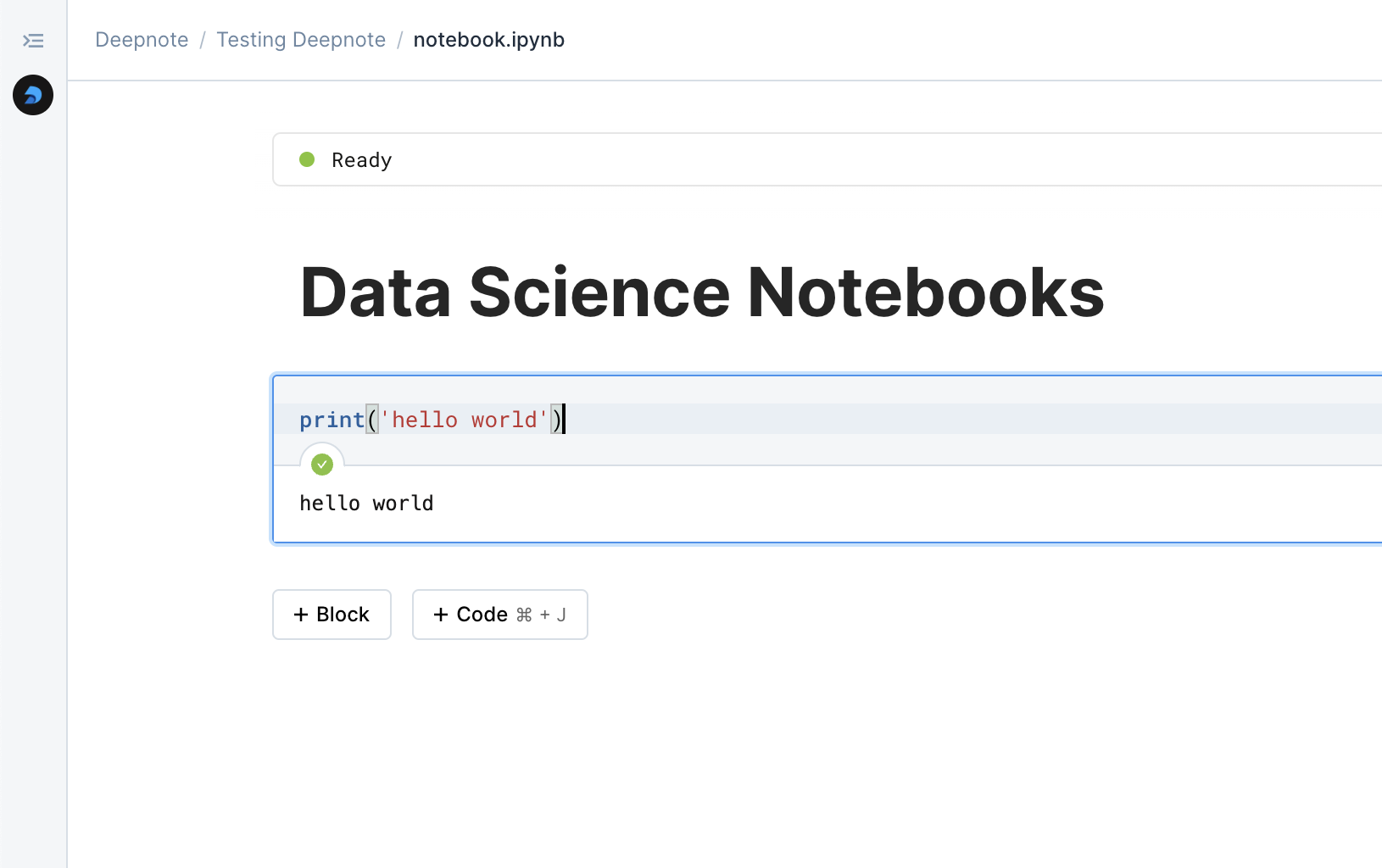

Deepnote

Deepnote is open source under the Apache 2.0 license. The open-source layer includes the .deepnote format, conversion tooling, block type definitions, and IDE extensions.

This means you can work with Deepnote notebooks locally in your preferred editor without depending on the cloud platform. When you need real-time collaboration, managed compute, or the Deepnote Agent, you can move the same project into Deepnote Cloud.

The practical result is that Deepnote is not a pure SaaS product with a proprietary format. The local-first workflow and open format reduce the lock-in concern that comparison shoppers often have when evaluating managed platforms.

If you are comparing notebook platforms for real work, data connectivity should be near the top of the list. Most notebook pain is not about writing Python. It is about getting connected to the right data, keeping those connections stable, and combining SQL and Python cleanly enough that the project remains usable a month later.

Data connectivity in Jupyter

Jupyter gives users a flexible way to connect to data, but those connections usually live at the notebook level or the environment level rather than inside a shared product workflow. Teams rely on Python libraries, custom authentication setup, environment variables, and separate SQL tooling. That works well for individual users or teams with strong infrastructure support, but creates setup overhead and configuration drift across collaborators.
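A minimal sketch of that notebook-level pattern, using only the standard library. The environment variable name `ANALYTICS_DB_PATH` and the `orders` table are hypothetical, and SQLite stands in for whatever warehouse driver a team actually uses:

```python
import os
import sqlite3


def connect_from_env(var_name="ANALYTICS_DB_PATH"):
    """Open a SQLite connection whose location comes from an environment variable.

    ANALYTICS_DB_PATH is a hypothetical name. If it is not set, an in-memory
    database is used so the sketch still runs.
    """
    db_path = os.environ.get(var_name, ":memory:")
    return sqlite3.connect(db_path)


def load_demo_orders(conn):
    """Create a tiny illustrative table (made-up rows, not real data)."""
    conn.execute("CREATE TABLE IF NOT EXISTS orders (region TEXT, amount REAL)")
    conn.executemany(
        "INSERT INTO orders VALUES (?, ?)",
        [("EU", 120.0), ("EU", 80.0), ("US", 50.0)],
    )
    conn.commit()


def revenue_by_region(conn):
    """Run SQL and hand the result back to Python as a plain dict."""
    rows = conn.execute(
        "SELECT region, SUM(amount) FROM orders GROUP BY region ORDER BY region"
    ).fetchall()
    return dict(rows)
```

Every collaborator has to set the same variable and install the same driver for this cell to behave identically, which is where the configuration drift tends to come from.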

Data connectivity in Deepnote

Deepnote puts data connectivity much closer to the center of the product. It includes 100+ data source integrations, support for working with Python and SQL in the same notebook, shared project-scoped setup, reusable modules, and a semantic-layer approach for trusted definitions and metrics. That changes the feel of the platform. For teams that constantly move between warehouse queries, notebook analysis, and stakeholder-facing outputs, this removes repetitive setup work and reduces mismatches between collaborators' runs.

It helps to frame these products correctly before getting into feature-by-feature comparisons.

How Jupyter is structured

Jupyter is not a single application. It is a stack of components.

The core components include:

- Jupyter Notebook: the original notebook interface
- JupyterLab: a multi-panel development environment
- Jupyter Server: the backend that serves notebooks and manages the kernels that execute code
- JupyterHub: multi-user deployment

The notebooks themselves are stored in the .ipynb format, which has become a widely adopted standard for interactive data analysis.

Because the platform is open source, teams can deploy Jupyter almost anywhere. It runs locally on a laptop, inside Docker containers, on Kubernetes clusters, or through hosted environments such as JupyterHub deployments.

This flexibility is one of Jupyter’s greatest strengths, but it also means organizations are responsible for deployment, security, configuration, and collaboration workflows.

How Deepnote is structured

Deepnote is a project-centric workspace. Where Jupyter treats each notebook as an isolated file, Deepnote groups multiple notebooks, shared integrations, environment settings, and permissions into a single project.

Users can:

- edit notebooks together in real time
- see collaborator cursors
- leave comments on cells
- run analysis in a shared execution environment

This makes notebook collaboration feel closer to tools like Google Docs.

Deepnote also integrates capabilities that teams often add manually to Jupyter setups, such as:

- AI-assisted code generation
- built-in database integrations
- app publishing from notebooks
- workspace-level permissions and audit history

The most visible difference between Jupyter and Deepnote appears when multiple people work on the same analysis.

Collaboration in Jupyter

Jupyter notebooks were originally designed for single-user workflows. Collaboration typically happens through file sharing or version control.

People pass .ipynb notebooks around, commit them to Git repositories, or deploy JupyterHub when they need multiple users on shared infrastructure. Modern versions of JupyterLab support limited real-time collaboration, but it is not enabled by default: it requires a shared environment and typically needs Jupyter Server 2 with the collaborative flag enabled.

For teams that already maintain custom infrastructure, this flexibility can be powerful. However, it also means teams must manage authentication, environments, and compute resources themselves.

Collaboration in Deepnote

Deepnote was designed around collaborative analytics workflows from the start. Multiple people can edit the same notebook live, share the same execution context, comment on blocks, resolve threads, and manage project-level access. This removes common friction points: environment mismatches, dependency inconsistencies, and difficulty reproducing results.

The publishing model is also different. In Jupyter, turning a notebook into a stakeholder-facing artifact usually means exporting it or adding a separate framework (Voila, Streamlit, Dash). Deepnote includes publishing inside the product through data apps, input blocks, and shareable project or app permissions. It can also share or embed individual notebook blocks, which is useful when a full notebook or full app would be more than needed.

AI-assisted development is rapidly becoming part of modern data workflows. Both Jupyter and Deepnote can support agentic data analysis, but the integration approaches differ significantly.

AI in the Jupyter ecosystem

In the Jupyter ecosystem, AI is usually extension-based. Tools such as Jupyter AI can bring model access into notebooks, and Jupyter AI supports a wide range of providers and custom provider patterns. That flexibility fits the broader Jupyter philosophy, but it also means the AI layer is something you install and configure. It is not a default part of the notebook product.

Because Jupyter is highly extensible, analysts can also call model APIs directly using Python libraries. This allows full control over AI workflows but requires additional setup and configuration.
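As a rough illustration of the direct approach, the sketch below builds a request for an OpenAI-compatible chat completions endpoint using only the standard library. The base URL, model name, and environment variable names (`LLM_BASE_URL`, `LLM_MODEL`, `LLM_API_KEY`) are placeholders, not values from any particular provider:

```python
import json
import os
import urllib.request


def build_chat_request(prompt, base_url=None, model=None, api_key=None):
    """Build (but do not send) a POST request for an OpenAI-compatible endpoint.

    All defaults below are hypothetical placeholders read from the environment.
    """
    base_url = base_url or os.environ.get("LLM_BASE_URL", "https://llm.example.internal/v1")
    model = model or os.environ.get("LLM_MODEL", "example-model")
    api_key = api_key or os.environ.get("LLM_API_KEY", "")

    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def send(request, timeout=30):
    """Send the request and return the first reply's text.

    Assumes the response follows the common chat-completions shape.
    """
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

In a notebook cell this would be used as `send(build_chat_request("Explain this dataframe"))`. Key storage, retries, and passing notebook context into the prompt are all left to the analyst.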

AI in Deepnote

Deepnote treats AI as a native collaborator.

The Deepnote Agent can help:

- generate Python or SQL
- create visualizations from natural language
- explain code and transformations

Deepnote Agent operates with full awareness of the notebook workspace, including existing code, datasets, and integrations, which allows it to reference them when generating suggestions. Changes stay reviewable inside the team workflow rather than living in a disconnected side tool.

For organizations with stricter governance needs, Deepnote also supports custom AI models on Enterprise. That lets teams connect their own OpenAI-compatible endpoints and control which provider serves AI requests inside the workspace. If you care about model choice, procurement, or internal AI routing, that is a meaningful differentiator.

Reuse in Jupyter

One of Jupyter’s quieter weaknesses in larger projects is that notebooks often become isolated units. Reuse is absolutely possible, but it usually means copying cells, importing Python modules from elsewhere, or maintaining a package structure outside the notebook flow. That is workable, but it can become messy if a team wants both narrative notebooks and shared logic in the same environment.
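The module-import route can be sketched as follows. The module name `team_metrics` and its `conversion_rate` function are hypothetical. The file is written to a temporary directory only so the example runs on its own, whereas in practice it would live in a shared repository or installed package:

```python
import importlib
import sys
import tempfile
from pathlib import Path

# Hypothetical shared logic that would normally live in a versioned .py file.
MODULE_SOURCE = '''
def conversion_rate(orders, visits):
    """Share of visits that became orders; 0.0 when there were no visits."""
    return orders / visits if visits else 0.0
'''


def import_shared_module(directory, name="team_metrics"):
    """Write the module into `directory`, put it on sys.path, and import it."""
    Path(directory, f"{name}.py").write_text(MODULE_SOURCE)
    if str(directory) not in sys.path:
        sys.path.insert(0, str(directory))
    importlib.invalidate_caches()
    return importlib.import_module(name)


shared_dir = tempfile.mkdtemp()
team_metrics = import_shared_module(shared_dir)

# Any notebook with the same path setup can now reuse the definition.
print(team_metrics.conversion_rate(30, 1200))
```

The logic is reusable, but the path setup and the module file sit outside the notebook, which is the friction described above.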

Reuse in Deepnote

Deepnote takes a more opinionated approach to reuse through Modules. Modules let teams package code, SQL queries, data transformations, and other shared logic into standardized components that can be imported across notebooks in a workspace. Teams can add parameters through input blocks, publish modules into a shared library, and update the source notebook so changes propagate to every notebook that uses the module.

This is especially useful for enterprise teams that need consistent KPI definitions, reusable transformation logic, and a cleaner path from one-off analysis to standardized team workflows.

Managing dependencies and environments is another area where notebook workflows can diverge.

Environment management in Jupyter

Jupyter gives you complete freedom over environments: Python, Conda, Docker, kernels, and system packages. If you need full control over the runtime, that flexibility is difficult to match. But it also means environment drift, package mismatches, and setup variance are your problem to solve, especially across multiple people or machines. This often gets you into 'it works on my machine' conversations with your team.
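One lightweight way to catch drift early is to compare the versions a project expects with what the current kernel actually has installed. This is a sketch using the standard library's `importlib.metadata`. The pinned versions in the usage note are made up for illustration:

```python
from importlib import metadata


def find_drift(pinned):
    """Return {package: (wanted, installed)} for every mismatch.

    `installed` is None when the package is missing from this environment.
    """
    drift = {}
    for package, wanted in pinned.items():
        try:
            installed = metadata.version(package)
        except metadata.PackageNotFoundError:
            installed = None
        if installed != wanted:
            drift[package] = (wanted, installed)
    return drift
```

Running `find_drift({"pandas": "2.2.2", "numpy": "1.26.4"})` in the first cell of a shared notebook makes mismatches visible before they turn into confusing results.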

Environment management in Deepnote

Deepnote provides managed environments where dependencies can be installed using pip or conda, with more advanced setup through custom images and initialization scripts. Projects can define environment configurations as well as store secrets to ensure reproducibility across collaborators.

Jupyter

Jupyter is primarily an authoring environment. Turning a notebook into a shareable application typically means adding a separate framework (Voila, Streamlit, Dash) and deploying it yourself.

Deepnote

Deepnote can publish data apps directly from a project: lightweight UIs with input blocks and interactive outputs, shared with link-based access controls. The app and the underlying analysis live in the same project, using the same data integrations and environment.

Beyond apps, Deepnote also supports scheduled notebook runs (parameterized jobs with notifications) and, as the format evolves, the ability to promote deterministic blocks to HTTP endpoints for lightweight inference.

Reactive execution in Jupyter

Jupyter uses a manual execution model. You run cells individually or sequentially, and there is no built-in mechanism to track which cells depend on which variables or outputs. If an upstream cell changes, downstream cells do not automatically update.

Reactive execution in Deepnote

Deepnote supports reactive notebook execution. When inputs or data change, dependent blocks automatically re-run. This keeps notebooks consistent and reproducible without requiring manual re-execution of the full chain. For workflows built around parameterized analysis or interactive inputs, reactive execution reduces a common source of stale-output errors.
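To make the difference between manual and reactive execution concrete, here is a toy model of dependency-driven re-execution. It is not Deepnote's implementation. The class and method names are invented for illustration, and it assumes a simple acyclic chain of blocks:

```python
class ReactiveNotebook:
    """Toy reactive runner: blocks re-run when something they depend on changes."""

    def __init__(self):
        self.values = {}    # latest value of every input and block
        self.blocks = {}    # block name -> (function, list of dependency names)
        self.run_log = []   # order in which blocks were executed

    def set_input(self, name, value):
        """Change an input and re-run everything downstream of it."""
        self.values[name] = value
        self._rerun_dependents(name)

    def add_block(self, name, func, depends_on):
        """Register a block and run it once if its dependencies are ready."""
        self.blocks[name] = (func, list(depends_on))
        self._run(name)

    def _run(self, name):
        func, deps = self.blocks[name]
        if all(dep in self.values for dep in deps):
            self.values[name] = func(*(self.values[dep] for dep in deps))
            self.run_log.append(name)
            self._rerun_dependents(name)

    def _rerun_dependents(self, changed):
        for name, (_, deps) in self.blocks.items():
            if changed in deps:
                self._run(name)


nb = ReactiveNotebook()
nb.set_input("threshold", 10)
nb.add_block("filtered", lambda t: [x for x in [5, 12, 20] if x > t], ["threshold"])
nb.add_block("count", lambda rows: len(rows), ["filtered"])

# Changing the input re-runs "filtered" and then "count" without manual steps.
nb.set_input("threshold", 15)
print(nb.values["filtered"], nb.values["count"])
```

In a manual model, the last step would update only `threshold`, leaving `filtered` and `count` stale until someone re-ran those cells.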

One advantage of the notebook ecosystem is the .ipynb file format, which allows notebooks to move between tools. Jupyter still owns the most widely adopted notebook format: the .ipynb file is the standard most people mean when they talk about notebooks, and tools like nbconvert make it easy to export notebooks into HTML, PDF (via our free IPYNB to PDF converter), Markdown, and other formats. That ubiquity still matters a lot.
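Part of what makes .ipynb portable is that it is plain JSON. The sketch below builds a minimal notebook dictionary in the version 4 layout, round-trips it through `json`, and extracts the code cells. The notebook content is made up, and real files usually carry more metadata:

```python
import json

# A minimal notebook in the nbformat 4 layout (illustrative content only).
notebook = {
    "nbformat": 4,
    "nbformat_minor": 4,
    "metadata": {},
    "cells": [
        {"cell_type": "markdown", "metadata": {}, "source": ["# Revenue check"]},
        {
            "cell_type": "code",
            "metadata": {},
            "execution_count": None,
            "outputs": [],
            "source": ["total = 120 + 80\n", "print(total)"],
        },
    ],
}


def code_cells(nb):
    """Return the source of each code cell as a single string."""
    sources = []
    for cell in nb["cells"]:
        if cell["cell_type"] == "code":
            src = cell["source"]
            # `source` may be stored as a list of lines or as one string.
            sources.append("".join(src) if isinstance(src, list) else src)
    return sources


serialized = json.dumps(notebook, indent=1)   # what would be written to a .ipynb file
restored = json.loads(serialized)             # what any other tool would read back
print(code_cells(restored))
```

Because the structure is this simple, converters and other tools can read and write the same files without depending on Jupyter itself.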

Moving from Jupyter to Deepnote

Deepnote’s portability story goes beyond simple .ipynb export. In addition to standard notebook compatibility, it uses a human-readable .deepnote YAML project format and supports conversion across Jupyter, Quarto, percent-style Python notebooks, and marimo. That makes the platform more portable than a typical hosted notebook product, while still preserving compatibility with the wider notebook ecosystem.

That means Deepnote is not only compatible with the standard notebook format, but is also investing in a broader open conversion layer around notebooks as portable artifacts.

Its open project format and conversion tooling reflect why Deepnote built a new notebook format: a notebook structure designed for collaboration, project context, and AI-assisted workflows rather than just single-file execution.

Exporting from Deepnote to Jupyter

Deepnote projects can be exported as archives containing .ipynb files and project assets.

Most standard notebook cells convert directly. However, some Deepnote-specific features, such as input blocks or apps, may require adjustment when exporting to plain Jupyter environments.

Jupyter pricing and operating model

Jupyter is free open-source software. Once you move beyond solo local use, the real cost is infrastructure, storage, authentication, security, upgrades, and the engineering time to keep a shared deployment running.

Deepnote pricing and operating model

Deepnote follows a SaaS model for its cloud platform. The current pricing includes a Free plan (up to 3 editors, 5 projects), a Team plan ($39 per editor/month, billed yearly), and an Enterprise plan (SSO, audit logs, private images, single-tenancy, BYO LLM). Deepnote offers unlimited viewers across all plans, which matters if your work is consumed by stakeholders who are not notebook editors.
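As a quick worked example of the Team plan arithmetic, the sketch below uses the per-editor price quoted above. The team size of eight editors is an arbitrary assumption, and viewers are left out because they are not billed:

```python
TEAM_PRICE_PER_EDITOR_MONTH = 39  # USD, billed yearly, as quoted above


def annual_team_cost(editors):
    """Yearly Team plan cost in USD for a given number of editors."""
    return TEAM_PRICE_PER_EDITOR_MONTH * 12 * editors


# Hypothetical team of 8 editors: 39 * 12 * 8
print(annual_team_cost(8))
```

The comparable Jupyter figure is not a list price. It is whatever the infrastructure and engineering time for a shared deployment add up to.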

The open-source tooling (format, conversion, and IDE extensions) is free regardless of plan.

Jupyter's open architecture, extensibility, and portability make it a natural fit for individual developers, researchers, and advanced users who want full control over where notebooks run and how the environment is configured. It is also a reasonable fit for organizations that already have dedicated platform headcount and are willing to operate JupyterHub or another multi-user deployment.

Deepnote is designed for teams that want to reduce the operational overhead of managing notebook infrastructure while making collaboration, sharing, and review easier. The platform is particularly useful when teams regularly need to share notebooks with stakeholders, publish analysis as interactive applications, or maintain consistent workflows across collaborators.

In practice, the choice comes down to workflow. Jupyter works well when flexibility and infrastructure control matter most. Deepnote is the better option for teams needing an agentic, collaborative notebook environment that makes it easier to move from analysis to review to delivery in one place, with the flexibility to work locally when needed.