Integrated file system

Deepnote has an integrated file system and native integrations to help you work with data files.

How to upload data files to Deepnote

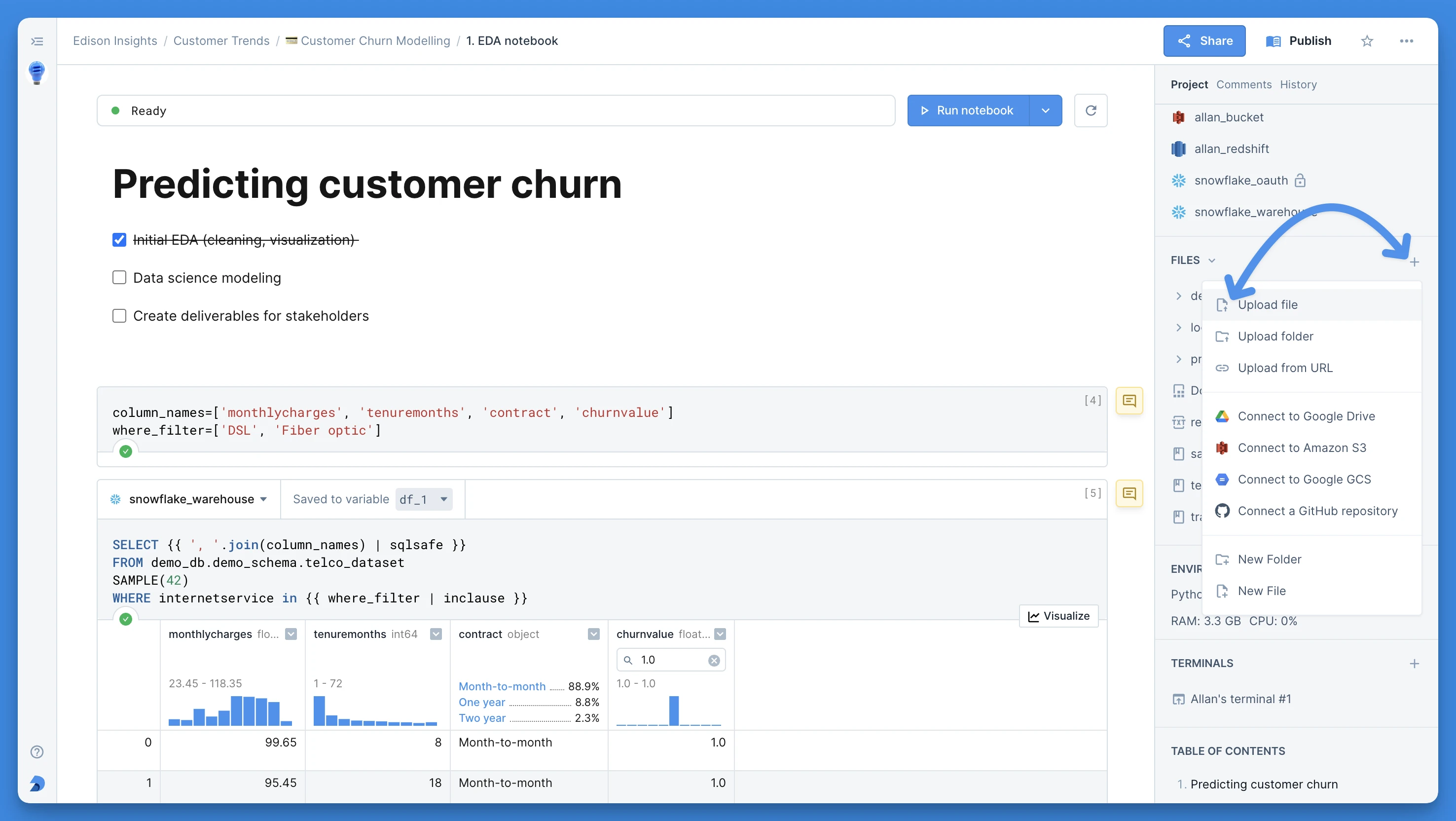

You can upload files into the integrated file system by dragging them from your computer into the FILES section in the right sidebar. You can also click the + button to upload files from your computer or from a URL.

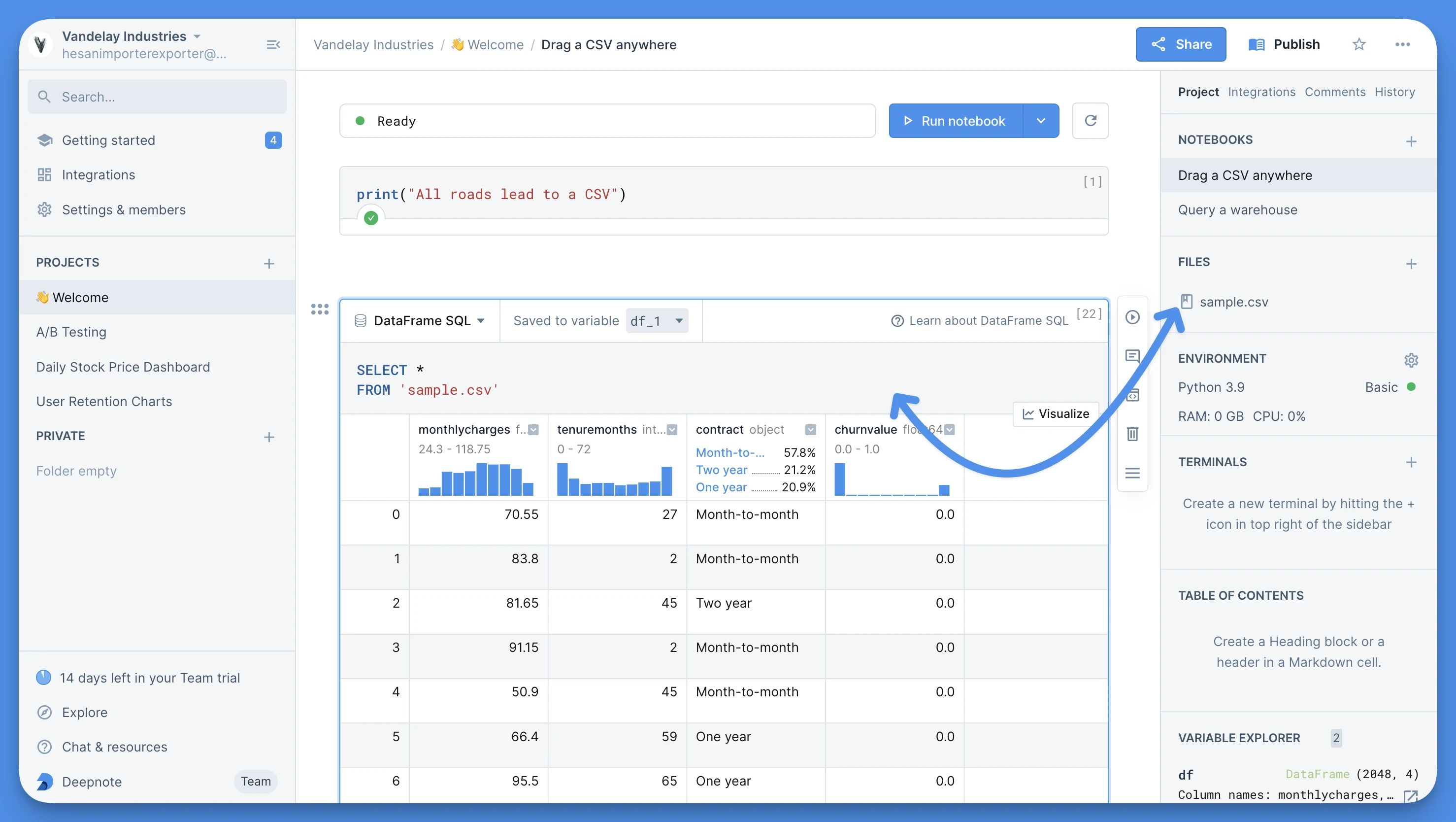

Pro tip: If you drag a CSV file into the notebook (either from your computer or from the file system), an SQL block with a prepopulated query will be created for you.

Remote storage

If your files live in a remote service or bucket, you can access them through one of the native Deepnote integrations. We have integrations with Amazon S3, Google Cloud Storage, Google Drive, Google Sheets, Microsoft OneDrive, Box, Dropbox, and Azure Blob Storage.

High-performance file system

The default working directory (/work) is backed by object storage and is not expected to provide the throughput needed for working with a large number of small files.

If you require high-throughput access to your data (e.g., when training ML models on a GPU or unzipping a file), you can switch to the fast ephemeral storage available in /tmp. We recommend copying the necessary files from the integrated storage (/work) to the project's ephemeral storage (/tmp/subdirectory) before executing the notebook.
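The copy step can also be done from a Python block. The following is a minimal sketch using only the standard library; the function name `stage_to_fast_storage` and the example paths `/work/my_dataset` and `/tmp/my_project` are hypothetical and should be replaced with your own.

```python
import shutil
from pathlib import Path


def stage_to_fast_storage(source: Path, fast_root: Path) -> Path:
    """Copy `source` (a file or a directory) under `fast_root` and return the new path."""
    fast_root.mkdir(parents=True, exist_ok=True)
    target = fast_root / source.name
    if source.is_dir():
        # dirs_exist_ok (Python 3.8+) lets the block be re-run
        # without failing when a previous copy already exists.
        shutil.copytree(source, target, dirs_exist_ok=True)
    else:
        shutil.copy2(source, target)
    return target


# Hypothetical usage; uncomment and adjust the paths to match your project:
# local_data = stage_to_fast_storage(Path("/work/my_dataset"), Path("/tmp/my_project"))
```

The function returns the path of the copy, so the rest of the notebook can read from that location instead of from /work.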

Tip: You can do this on project initialization by adding a copy command to your project's init notebook:

!cp -fr /work/<data> /tmp

Please note that any files stored in the fast ephemeral storage will be lost when the machine restarts. Don't forget to copy them back to the integrated storage if you want to persist them between restarts.
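To persist results, you might copy them back at the end of the notebook. The sketch below assumes your outputs sit in a single directory under /tmp; the function name `persist_outputs`, the paths, and the `*.pt` pattern are hypothetical examples, not part of Deepnote.

```python
import shutil
from pathlib import Path
from typing import List


def persist_outputs(fast_dir: Path, persistent_dir: Path, pattern: str = "*") -> List[Path]:
    """Copy files matching `pattern` from `fast_dir` to `persistent_dir`.

    The search is recursive and the relative directory layout is preserved.
    """
    copied = []
    for src in sorted(fast_dir.rglob(pattern)):
        if not src.is_file():
            continue  # skip directories; they are created as needed below
        dst = persistent_dir / src.relative_to(fast_dir)
        dst.parent.mkdir(parents=True, exist_ok=True)
        shutil.copy2(src, dst)
        copied.append(dst)
    return copied


# Hypothetical usage; uncomment and adjust the paths and pattern:
# persist_outputs(Path("/tmp/my_project/outputs"), Path("/work/outputs"), "*.pt")
```

Copying only the files you need (here, by pattern) keeps the number of small files written to /work low.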